I've been thinking about the intersection of fiction writing and the rise of large language model (LLM) usage for a few months now. Every time I sat down to work on a blog post, however, I kept getting hung up on the prohibitionist mindsets of many of the publishing industry's gatekeepers, some of them quite justified.

Case in point: in February 2023, Clarkesworld Magazine, a leading SciFi and Fantasy short-form fiction magazine, was so overwhelmed by AI-generated stories that the editor-in-chief, Neil Clarke, closed submissions. Their submissions page now includes a notice that the editors "will not consider any submissions translated, written, developed, or assisted by [AI writing] tools."

Asimov's and Analog, two other well-known SciFi short-form magazines, have also banned generative-AI submissions.

More recently, the Science Fiction & Fantasy Writers Association (SFWA) issued a statement updating their policy for submissions to the Nebula Awards® to include zero-tolerance language. As in, any use of AI must be declared by the author or publisher at the time of submission to the award, and those stories then become ineligible for consideration.

Given this, now seems like a good time to discuss what everyone -- publishers, editors, authors, and readers -- gets wrong about fiction and AI.

AI Slop

Leaving aside how ironic it is for Science Fiction advocates to effect a total ban on a particular technology, let's acknowledge the elephant in the room: AI slop has become a real problem.

Whenever I visit Facebook, my feed is filled with AI-generated posts, many from legitimate entities (politicians, for example), but many more from people, groups, and organizations I've never heard of.

These posts are easy to spot for anyone with even a little experience using ChatGPT (and these posts are almost always generated by ChatGPT). Aside from the tendency to reduce everything to bullet points, constructions like "it's not this, it's that" and "the room smelled like floor cleaner and desperation" are dead giveaways. Chatbots love to incorporate meaningless profundities into their responses.[1]

Word-salad junk in your social media feed is relatively easy to ignore. When it pops up in fiction, however, it becomes insulting.

Take, for example, my current reading material, a relatively long series by a particular author. It's obvious (to me, anyway) that they're using a combination of ChatGPT, Claude, and possibly another LLM chatbot to write their fiction for them.

Worse still are authors who leave prompts and responses in their published fiction, as two Romantasy authors did earlier this year.

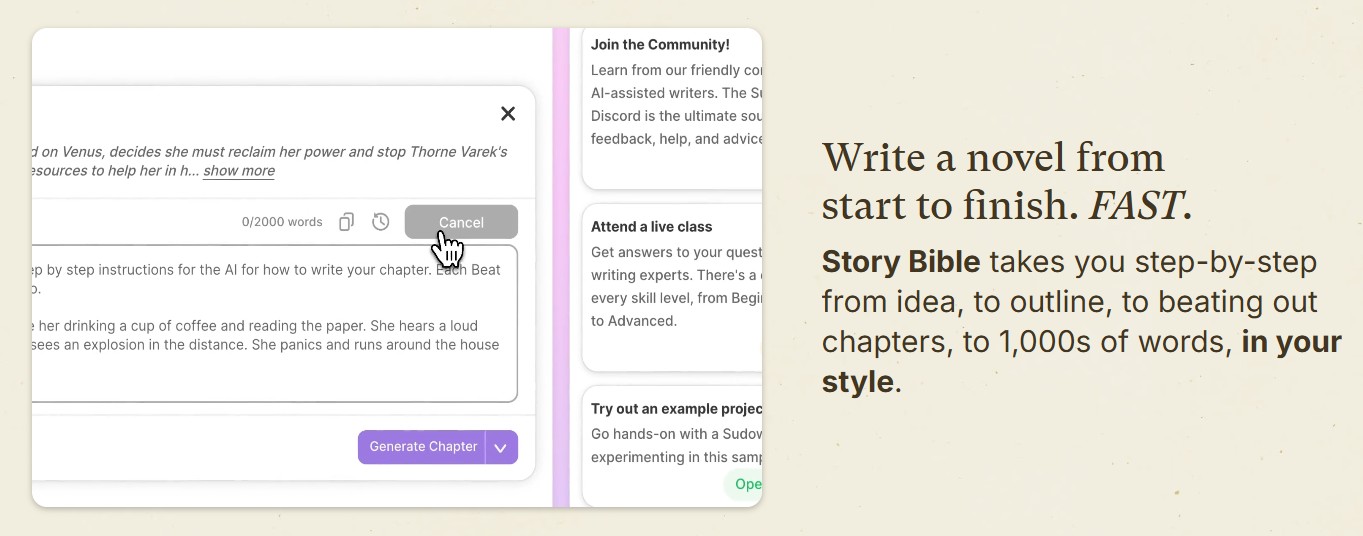

Everyone is sounding the alarm about "AI slop" being on the rise in fiction, as if it's only now becoming a problem when it's been a problem for a while. Author Joanna Penn has been discussing the changing face of AI tools for writers on her podcast, The Creative Penn, for roughly a decade.[2] Sudowrite, a generative-AI novel-writing tool, was released to the public in 2021, though its Story Engine didn't come out until May 2023.

And yes, Sudowrite isn't just an assistive tool. It can do everything from outlining a novel to creating character arcs to writing the prose. The creator, James Yu, has openly stated that authors can use it to "write a novel in just a few days."

The upshot here is that I don't blame publishers and editors for creating zero-tolerance policies. I'm just not sure whether they've considered how those policies will affect author behavior.

A Slippery Slope

When I saw the notice of SFWA's policy changes, I took a moment to think about the implications. It's one thing to ban the use of generative AI chatbots the way Clarkesworld does. It's another thing entirely to create policies that functionally prohibit the use of any AI in the writing process.

Does that mean I can no longer use a search engine that automatically generates AI-written summaries? What about using tools like Perplexity.ai for research? Is that verboten now?

What about Word, which has had predictive text for a while? And now that Microsoft has forced Copilot on its users, should I just get rid of it, even though I disable Copilot every time Microsoft Office is updated?

If I hop over to ChatGPT and ask it to help me find a word based on a vague description or to differentiate between, say, "intelligence officer" and "intelligence operative," does that count as using AI in my writing process?

What if someone uses it for marketing purposes, or to write a business plan, or to find the most common tropes in XYZ genre?

In other words, where's the line? No one wants to define it exactly, and therein lies the problem. If an honest author gets caught in the "They used AI!" trap for simply using ChatGPT to look up a word, then we're all fucked.

And again, that's not even the real problem. If authors are required to disclose AI usage and their stories are then disqualified from awards and publication, sooner or later authors are going to stop disclosing their use of these tools, which reduces zero-tolerance policies to little more than performative handwaving.

To reduce the clutter of AI-slop in submissions and awards piles, lines have to be drawn between what is and what isn't acceptable. Blanket anti-AI policies will backfire in ways that no one can predict, whereas a little explication can go a long way toward maintaining an honest and open system.

Under Pressure

The rise of indie publishing has, in many ways, leveled the playing field, allowing authors who were not a good match for corporate publishers to find an audience on their own. Many indie authors have done exactly that, and good on them.

However, self-publishing has also created a pressure-cooker environment for these authors, primarily through the rise of rapid-release strategies and the demands of retailer algorithms. The demand for new content, especially in genres that do well in Kindle Unlimited (Romance, Urban Fantasy, etc.), has generated an enormous amount of pressure to produce.[3]

To feed hungry audiences, many authors began accelerating their timetables, eventually producing a book every month, then two a month, or even one a week. Some did it by hiring ghostwriters. Others turned to generative AI.

This is the aspect that no one wants to address: established authors are desperate to remain competitive, while newer authors are desperate to break into the market. Time and again, the suggested remedy is to rapidly publish a long series.

That's terrible advice for all kinds of reasons, not least because most writers cannot balance quality writing and high output. Some can. Most can't.

Even so, the problem isn't pulp, formula, or assembly-line fiction, all of which have been around for roughly a century now.

The real problem is that this pressure to produce creates a false sense of urgency, making authors on every publishing path feel as if the only way to reach their financial goals is more, more, more -- more books, more ads, more social media time -- an issue that arose separately from the development of generative AI.

No wonder we're in the middle of a massive wave of author burnout. We've been told that to succeed, we have to do it all, and we have to do it all right now.

That's not the way it works. Publishing is a long game. Even self-publishing doesn't pay out overnight. Patience is a virtue here, impatience a career-killing sin. There's nothing wrong with slow and steady, says the tortoise to the hare.

What's Really at Stake

If you think ChatGPT and Claude are the beginning and end of AI writing tools, you'd be wrong. Sudowrite, which I mentioned previously, and ProWritingAid both depend on generative AI to "help" authors write, and even innocuous-looking tools such as Grammarly rely heavily on generative AI.

I know many authors who use these programs without thinking twice about it. It never occurs to them that they may be abdicating their creative agency when they cede authority to generative AI.

The entire point of making art, whether it's literary or commercial fiction, is to engage in the creative process. There's no easy path to creation and few shortcuts between idea and readable fiction. The only way to become a good writer is to read a lot, learn the craft and mechanics of writing, and practice deliberately.

That takes time and effort. Hours, really, over decades of one's life; hours of studying great literature and craft guides, hours of figuring out one's writing process, hours more to write and rewrite, to edit and revise, and to learn how to effectively incorporate themes, archetypes, and tropes.

Moreover, it takes hours to develop a unique presence on the page, that elusive trait called "voice." ChatGPT and Claude may generate readable text, but they cannot replicate authentic emotion because they're simulations, nothing more. Their voice is the voice of millions of words randomized until they form safe, sycophantic reassurances. It's knockoff, bargain basement creativity, a hollow imitation of the real deal.

There's no substitute for genuinely engaging in the creative process, for struggling with the plot and the prose until one finds the heart of the story. The struggle is the point, those hours of learning and practicing and thinking and doing. Without that, there is no story.

The Competitive Edge

How, then, can authors compete in a market chock full of AI slop and formulaic tripe?

The answer is hard and simple and incredibly obvious all at once: by being authentically human.

I'm not telling anyone to give up their technology, whatever it may be. I'm just advising writers to use their tools in a way that strategically amplifies their process rather than outsourcing their creativity and agency. Learn how to craft a good sentence so that you know when to tell Grammarly to fuck off. Understand plot and structure so that when you get feedback -- whether from a writer's group, beta readers, or ChatGPT -- you know whether it's valid or wildly inaccurate.

In other words, retain authority over your own work and let it stand as a fair representation of who you are as an author.

Many writers are terrified that they'll lose their jobs to AI in one, five, or ten years, but this isn't so. There will always be a demand for human-written stories so long as humans are around to read them.

However, fiction that can be replaced by AI-generated content will be replaced by AI-generated content. The barbarians are at the gate, not because the creative arts are disappearing, but because the market always prioritizes cheaper substitutes for assembly-line products. The only way authors can survive is, as Becca Syme says, to "stand out and be memorable and excellent" and to be uniquely human regardless of one's tools.

Become irreplaceable and irreproducible. That's the way forward.

Here's the Line

Using ChatGPT to find plot holes is not the same thing as using it to generate an entire story, yet when editors and publishers implement "no AI" policies, they give authors the impression, whether they intend it or not, that the two are equivalent.

The line should not have embracing AI on one side and total abstinence on the other. That's an increasingly impossible choice, given the way AI is overtaking nearly every aspect of our digital lives.

The real line is between automation and authorship, between creative abdication and creative ownership. These are the things that matter, not which tools we choose to incorporate into our writing process. It's not an easy line to draw, or even a particularly safe one, but that doesn't excuse not drawing a line at all.

Footnotes:

[1] Seriously, what exactly does desperation smell like? Yeah, I don't know either.

[2] This is not a name-and-shame post. I mention Joanna by name because she's been an advocate of embracing responsible AI usage since 2016 and has gathered a ton of resources on AI tools for writers.

[3] The heading "Under Pressure" was inspired by the 1981 song of the same name by Queen and David Bowie, which seems rather appropriate, given the topic at hand.